The Role of Tech Startups in the Growth of the eBike Industry

The eBike industry has seen rapid growth in recent years, driven by increasing awareness of environmental issues, the convenience of eBikes for commuting, and advancements in technology. eBikes offer a sustainable alternative to traditional transportation methods, making them an attractive option for eco-conscious consumers. As the demand for eBikes rises, tech startups play a pivotal role in driving innovation and expanding the market. These startups are not only introducing cutting-edge technologies but also making eBikes more accessible to a wider audience.

This article explores how tech startups contribute to the growth of the eBike industry.

1. Innovation in eBike Design

Tech startups are leading the charge in eBike design innovation, creating models that are both aesthetically pleasing and highly functional. One significant trend is the development of carbon fiber eBikes. These bikes are popular because they are lightweight yet durable, offering a smoother and more efficient ride than frames built from traditional materials such as steel and aluminum. Carbon fiber's high strength-to-weight ratio also helps offset the extra weight of the motor and battery, so riders get high performance without a heavy frame.

These startups focus on making eBikes attractive and practical for everyday use. They incorporate sleek, modern designs and ergonomic features that appeal to a broad range of consumers. By using advanced materials like carbon fiber, these companies can produce high-quality bikes that meet the demands of both casual riders and serious cyclists. Innovative carbon fiber electric bike designs help attract new customers and encourage the adoption of eBikes as a mainstream mode of transportation.

2. Development of Smart eBike Technologies

Another area where tech startups are making a significant impact is the integration of smart technologies into eBikes. Many modern eBikes come equipped with features like GPS navigation, smartphone connectivity, and advanced safety systems. These technologies enhance the user experience by providing real-time ride data, route optimization, and security features. For instance, GPS-enabled eBikes can offer turn-by-turn directions, making it easier for riders to navigate unfamiliar areas.

Smartphone connectivity allows riders to control various aspects of their eBike through dedicated apps. Users can monitor battery life, track their rides, and even customize their bike’s performance settings. Advanced safety systems, such as anti-theft alarms and automatic crash detection, add an extra layer of security and peace of mind for riders.

3. Battery and Motor Advancements

Tech startups are also at the forefront of advancements in eBike battery and motor technologies. Improvements in battery life, charging times, and motor efficiency are critical for making eBikes more practical and appealing. Startups are developing batteries that offer longer ranges, allowing riders to travel greater distances on a single charge. Faster charging times mean that eBikes can be quickly recharged during short breaks, increasing their convenience for daily use.

Innovations in motor technology are also enhancing eBike performance. More efficient motors draw less energy from the battery, which extends range, while better-tuned motor control delivers smoother acceleration, making eBikes more enjoyable to ride. These advancements also contribute to the overall reliability and longevity of the bikes. By improving these core components, startups are addressing key concerns that potential eBike users may have, such as range anxiety and maintenance issues, and this is essential for the continued growth and adoption of eBikes.

4. Market Expansion and Accessibility

Tech startups are instrumental in making eBikes accessible to a broader audience. By developing cost-effective models and exploring innovative financing options, these companies are lowering the barriers to entry for consumers. For instance, startups are working to reduce production costs without compromising quality, enabling more people to afford high-quality eBikes. These efforts are crucial in establishing eBikes as a viable alternative to traditional transportation methods.

Furthermore, startups are targeting different market segments, including urban commuters, recreational riders, and delivery services. By catering to these diverse needs, they broaden the appeal of eBikes. Urban commuters benefit from the convenience and efficiency of eBikes, recreational riders enjoy their versatility and ease of use, and delivery services find eBikes an eco-friendly, cost-effective solution for their logistics needs.

5. Environmental and Economic Impact

The environmental benefits of eBikes are substantial, and tech startups are at the forefront of promoting sustainable transportation solutions. eBikes produce zero tailpipe emissions, making them an eco-friendly alternative to cars and motorcycles. When eBike trips replace car or motorcycle trips, they reduce reliance on fossil fuels, helping to cut air pollution and carbon emissions. Startups are not only developing environmentally friendly products but also educating consumers about the benefits of sustainable transportation.

In addition to environmental advantages, the growth of the eBike industry has a positive economic impact. The increasing demand for eBikes creates job opportunities in manufacturing, sales, and maintenance. Moreover, investments in eBike startups stimulate economic growth and innovation. By contributing to the development of green cities and reducing traffic congestion, eBikes play a crucial role in urban planning and sustainability efforts. The combined environmental and economic benefits underscore the significance of tech startups in advancing the eBike industry.

6. Future Trends and Innovations

The future of the eBike industry looks promising, with tech startups driving continuous innovation. Potential future trends include deeper artificial intelligence (AI) integration, autonomous eBikes, and further advances in materials and battery technology. AI could enhance eBike functionality with features like adaptive riding modes and predictive maintenance. Autonomous eBikes, though still experimental, could transform urban mobility.

Material and battery technology will likely see significant advancements, resulting in lighter, more durable, and longer-lasting eBikes. Startups are already exploring new composites and battery chemistries to achieve these goals. Additionally, the integration of renewable energy sources, such as solar charging capabilities, could further enhance the sustainability of eBikes. As tech startups continue to push the boundaries of innovation, the eBike industry will evolve, offering even more efficient and eco-friendly transportation solutions.

Conclusion

Tech startups play a pivotal role in the growth and innovation of the eBike industry. From pioneering new designs and smart technologies to advancing battery and motor efficiency, these companies are transforming eBikes into a mainstream transportation option. By making eBikes more accessible and promoting their environmental and economic benefits, startups are driving significant market expansion. Looking ahead, the continuous innovation from these startups promises an exciting future for the eBike industry, with even more advanced and sustainable solutions on the horizon. Supporting and investing in these innovative companies is essential for the continued growth and success of the eBike industry.

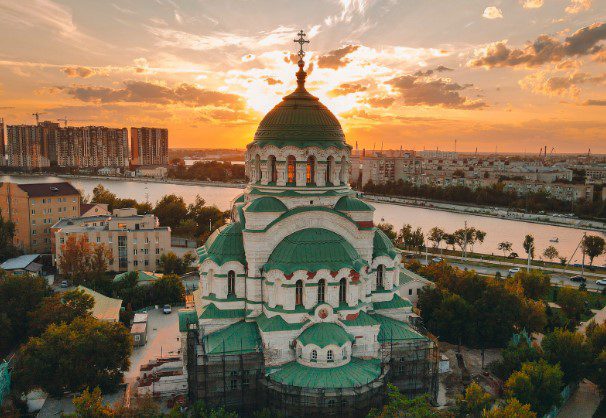
